About ElevateBio

Who We Are

We are a group of explorers who share an undying passion for constant discovery, new technology, and progress to strengthen and accelerate the development of life-transforming therapies.

Christine Leu

Senior Manager, Financial Planning & Analysis

Jonathan Bairam

Associate Director, Cell Therapy Manufacturing

Our Benefits

Health & Wellness

Medical, vision, and dental insurance covered at 90%

Low deductible, no co-insurance and no copays for mental health visits (for in-network care)

Annual fitness reimbursement

Employer-paid access to wellness apps and programs such as Task Human

Financial

Competitive compensation

401(k) retirement plans with a 4% employer match and no vesting period

Employer-paid life insurance and short- and long-term disability insurance

Employer-sponsored flexible spending account (FSA) and dependent care account (DCA)

Access to a 1-on-1 financial advisor

Commuter reimbursement benefit

Monthly cell phone stipend

Time Off & Flexibility

Unlimited paid time off, including vacation, sick leave, family leave, personal days, and bereavement

18 paid company holidays, plus an end-of-year holiday shutdown

12 weeks of parental leave

Federal Family and Medical Leave Act (FMLA) and Massachusetts Paid Family and Medical Leave (PFML), administered by Unum

Development Journey

Insights Discovery training for every employee to make the most of workplace relationships

Ongoing professional development, including manager training

Access to LinkedIn Learning, a self-guided online education platform

About ElevateBio®

ElevateBio is a technology-driven company built to power the development of transformative cell and gene therapies today and for many decades to come.

The company’s integrated technologies model offers turnkey scale and biotechnological capabilities to power cell and gene therapy processes, programs, and companies to their full potential. The ElevateBio ecosystem combines multiple R&D technology platforms – including Life Edit, a next-generation, full-spectrum gene-editing platform; comprehensive cell therapy enabling technologies; an RNA engineering platform; and a viral and non-viral therapeutic delivery platform – with BaseCamp®, its end-to-end genetic medicine cGMP manufacturing and process development business, to power the discovery and development of advanced therapeutics.

In addition to supporting a broad range of biopharmaceutical companies in the development of their novel cell and gene therapies, ElevateBio is also building a highly innovative pipeline of cellular, genetic, and regenerative medicines. ElevateBio aims to be the dominant engine inside the world’s greatest scientific advancements harnessing human cells and genes to alter disease.

Current Open Positions

Business Development

Cellular Engineering

Senior Associate Scientist, Cell Therapy Discovery, B Cell Therapeutics

Waltham, MA - Wyman

Commercial - BaseCamp BD

Commercial Marketing

Facilities and Engineering

IT

Manufacturing

Process Development

Quality Assurance

Quality Control

QC Specialist I, QC Analytics Cell Therapy (Multiple Positions)

Waltham, MA - Smith

Supply Chain

Technical Operations

Computational Biology

Facilities

Preclinical Development

Technology Development

About ElevateBio

Driven by science and discovery, we are powering the creation of life-transforming cell and gene therapies, at a speed the world demands.

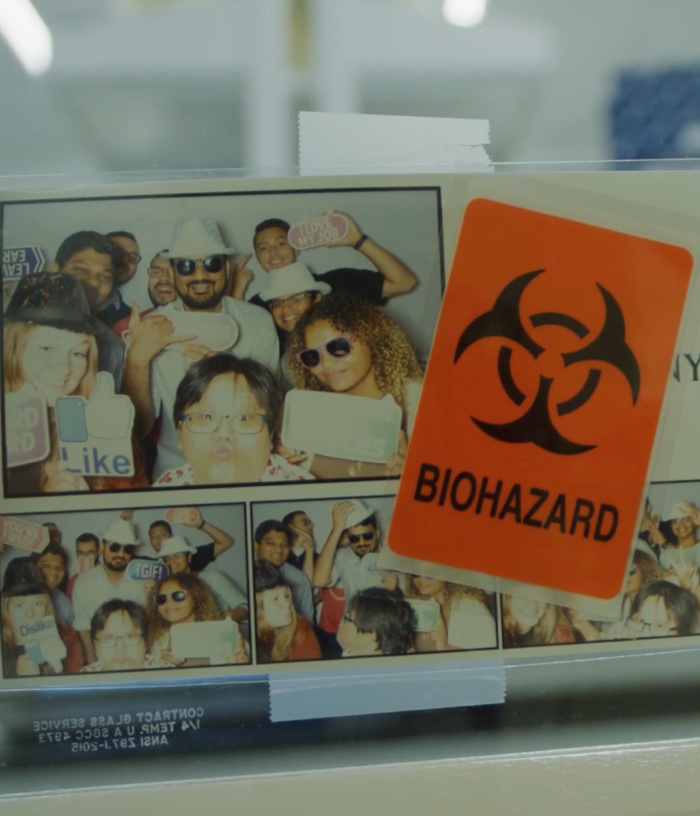

Culture of Expedition

Driven by our values and powered by our employees, we’re climbing to new heights, together.